Day 3 - Part 1 - Introduction to AI

🤖 Artificial Intelligence (AI)

“The science and engineering of making intelligent machines.” — John McCarthy, 1956

🧠 What is AI?

Artificial Intelligence refers to machines mimicking human intelligence to perform tasks such as reasoning, learning, problem-solving, and decision-making.

📅 A Brief History

🗓️ 1950s: AI formally began as an academic field.

Early systems were rule-based (Symbolic AI), using explicit instructions rather than learning from data.

🗓️ 1980s-90s: Rise of Expert Systems that encoded human expertise.

🗓️ 2000s: Shift towards data-driven approaches.

🗓️ 2010s: Explosion of Machine Learning and Deep Learning due to:

Increased data availability

Improved computing power (GPUs)

Advances in algorithms

🗓️ 2020s: AI is now pervasive in applications like:

🗣️ Natural language processing (NLP)

🖼️ Computer vision

🚗 Autonomous systems

Present: AI continues to evolve with breakthroughs in reinforcement learning, transformers, and large language models (LLMs).

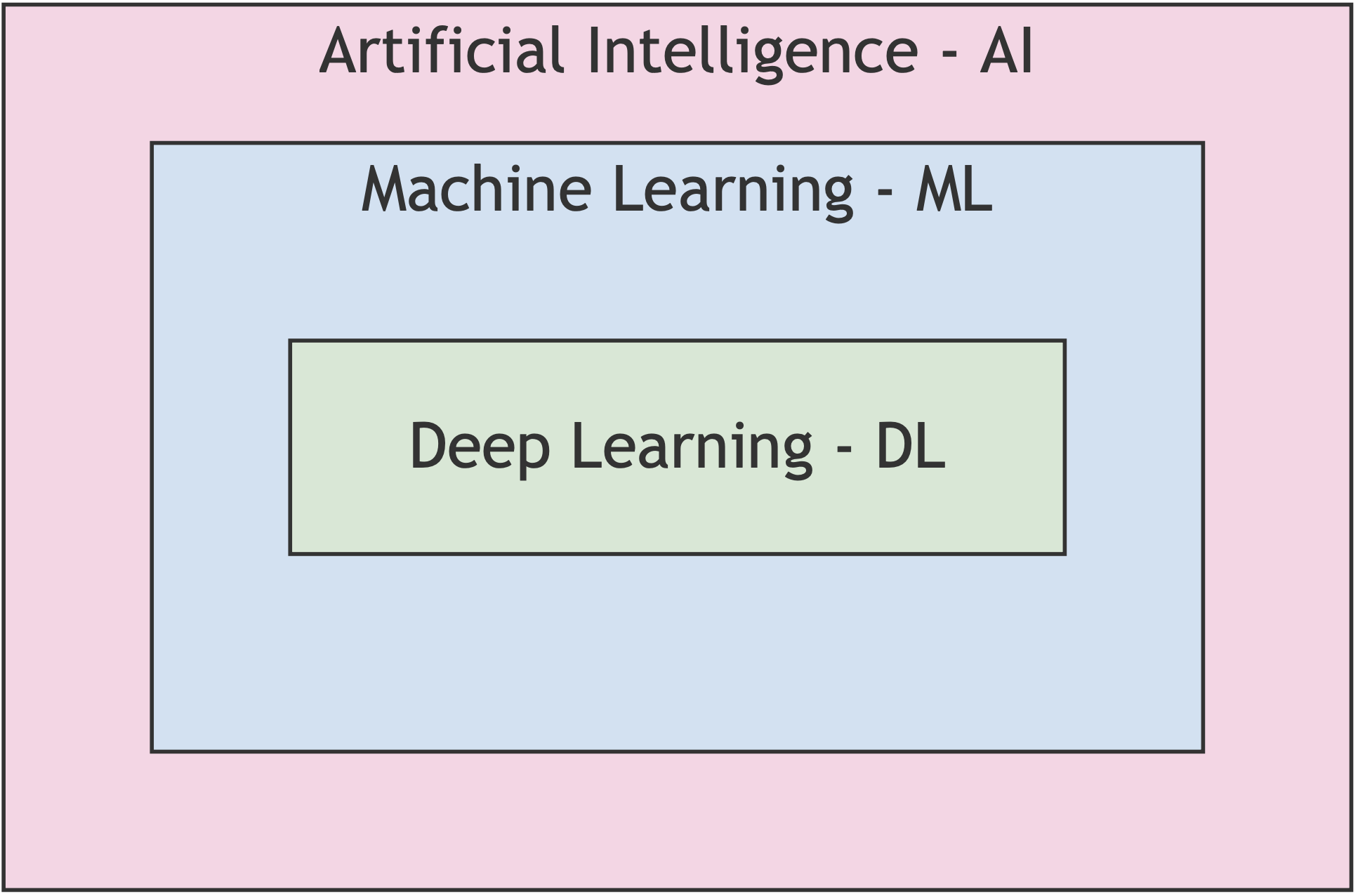

🌐 Scope of AI

AI is the broadest umbrella that includes:

✅ Non-learning systems

🟢 Expert systems

🟢 Hand-written decision trees (as opposed to trees learned from data)

🟢 Rule-based logic (Symbolic AI)

✅ Machine Learning (ML)

🟢 Deep Learning (DL)

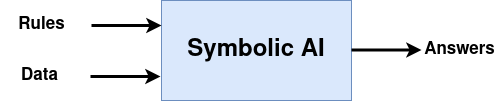

🎯 Symbolic AI

The first AI chess programs used hard-coded rules.

Great for well-defined problems like chess or solving logic puzzles.

Expert systems encoded rules and knowledge from human experts. They were used for tasks such as medical diagnosis and, in chemistry, molecular structure prediction.

Limitations:

Could not scale to complex tasks like:

🖼️ Computer vision

🗣️ Natural language processing

These challenges motivated the rise of Machine Learning.
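To make the rule-based approach concrete, here is a minimal sketch of a toy expert system. The rules, thresholds, and the `diagnose` function are hypothetical, invented for illustration only and not taken from any real system. Every behavior is written by hand as an explicit condition, and nothing is learned from data.

```python
# Hypothetical toy rules; thresholds are illustrative, not medical guidance.
# Each rule is a (condition, conclusion) pair written by a human "expert".
RULES = [
    (lambda f: f["temperature_c"] >= 38.0 and f["cough"], "possible flu"),
    (lambda f: f["temperature_c"] >= 38.0, "fever of unknown cause"),
    (lambda f: f["cough"], "possible cold"),
]


def diagnose(facts):
    """Return the conclusion of the first rule whose condition matches."""
    for condition, conclusion in RULES:
        if condition(facts):
            return conclusion
    return "no rule matched"


print(diagnose({"temperature_c": 38.5, "cough": True}))   # possible flu
print(diagnose({"temperature_c": 36.8, "cough": False}))  # no rule matched
```

Covering a new situation means writing another rule by hand, which hints at why this style struggles with inputs like raw images or free-form text.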

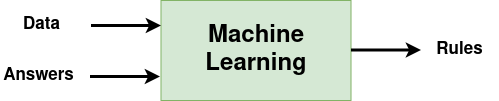

📘 Machine Learning (ML)

“Machine learning arises from this question: could a computer go beyond ‘what we know how to order it to perform’ and learn on its own how to perform a specified task?” (François Chollet, Deep Learning with Python)

🤖 What is ML?

Machine Learning is a subset of AI that focuses on algorithms that allow computers to learn from data and improve performance on a task without being explicitly programmed.

📌 Key Characteristics

Learns patterns and rules from data.

Improves performance through experience.

Used in a wide range of applications:

📧 Email spam filtering

📈 Stock prediction

🏥 Disease diagnosis

🗣️ Speech and language recognition

🖼️ Image classification

🚗 Autonomous driving

🤖 Chatbots and virtual assistants

Not just about data: ML also involves algorithms and models that can generalize from examples.

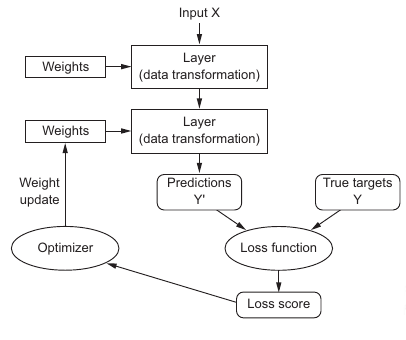

🧠 How It Works

At its core, ML involves:

Input Data – examples or observations.

Learning Algorithm – identifies patterns or rules.

Model – generalizes from the data.

Loss Function – measures how far the model's predictions are from the expected outputs.

Guides the learning process by quantifying errors.

Used to adjust model parameters.

Training – iteratively adjusts the model to minimize the loss function.

Uses techniques like gradient descent.

May use a held-out validation set to detect overfitting.

Prediction/Decision – applies the learned model to new data.
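The steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not a production recipe: the toy data (generated from y = 2x + 1), the linear model, the mean squared error loss, and the learning rate are all assumptions chosen for the example.

```python
# Input data: toy examples generated from y = 2x + 1 (an assumption for this sketch).
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]


def predict(w, b, x):
    """Model: a straight line with parameters w (slope) and b (intercept)."""
    return w * x + b


def mse_loss(w, b):
    """Loss function: mean squared error between predictions and targets."""
    return sum((predict(w, b, x) - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train(steps=2000, lr=0.05):
    """Training: gradient descent repeatedly nudges w and b to reduce the loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        grad_w = sum(2 * (predict(w, b, x) - y) * x for x, y in zip(xs, ys)) / n
        grad_b = sum(2 * (predict(w, b, x) - y) for x, y in zip(xs, ys)) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


w, b = train()
print(f"w={w:.3f}, b={b:.3f}, loss={mse_loss(w, b):.6f}")
# Prediction: apply the learned model to an input that was not in the data.
print(f"prediction for x=10: {predict(w, b, 10.0):.2f}")
```

The learned parameters should end up close to the slope 2 and intercept 1 used to generate the data, so the prediction for x = 10 lands near 21.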

🧠 Types of Machine Learning

Machine Learning can be categorized into several main types based on how models learn from data:

1. Supervised Learning

Definition: The model learns from labeled input-output pairs.

Goal: Predict an output (label) from input data.

Examples:

Classification: Spam detection, disease diagnosis

Regression: House price prediction, temperature forecasting

Common Algorithms:

Linear Regression

Logistic Regression

Decision Trees

Support Vector Machines (SVM)

Neural Networks
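As a small supervised classification example, the sketch below trains logistic regression (one of the algorithms listed above) on labeled input-output pairs. The data set (hours studied versus pass or fail), the learning rate, and the number of steps are hypothetical values picked for illustration.

```python
import math

# Hypothetical labeled data: hours studied -> failed (0) or passed (1).
hours = [0.5, 1.0, 1.5, 2.0, 3.0, 3.5, 4.0, 4.5]
passed = [0, 0, 0, 0, 1, 1, 1, 1]


def sigmoid(z):
    """Squash a real number into a probability between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))


def train(xs, ys, steps=20000, lr=0.3):
    """Fit weight w and bias b by gradient descent on the cross-entropy loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # For logistic regression the gradient is (prediction - label) * input.
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        w -= lr * sum(e * x for e, x in zip(errors, xs)) / n
        b -= lr * sum(errors) / n
    return w, b


def classify(w, b, x):
    """Predict class 1 when the estimated probability is at least 0.5."""
    return 1 if sigmoid(w * x + b) >= 0.5 else 0


w, b = train(hours, passed)
print(classify(w, b, 1.0), classify(w, b, 4.0))
```

The labels are what make this supervised: each training step compares the model's prediction with the known answer for that input.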

2. Unsupervised Learning

Definition: The model learns patterns or structure from unlabeled data.

Goal: Discover hidden patterns or groupings.

Examples:

Clustering: Customer segmentation, gene grouping

Dimensionality Reduction: PCA, t-SNE

Anomaly Detection: one-class SVM, Isolation Forest, k-NN outlier detection

Common Algorithms:

k-Means

DBSCAN

Hierarchical Clustering

Autoencoders
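To illustrate learning structure without labels, here is a minimal k-Means sketch. The points and the choice of starting centroids are assumptions made for this example. Real implementations usually pick starting centroids randomly or with a smarter seeding scheme.

```python
def squared_distance(p, q):
    """Squared Euclidean distance between two points of equal dimension."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def mean_point(points):
    """Coordinate-wise mean of a non-empty list of points."""
    return tuple(sum(coords) / len(points) for coords in zip(*points))


def kmeans(points, centroids, iterations=10):
    """Alternate between assigning points to the nearest centroid and recomputing centroids."""
    clusters = []
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)),
                          key=lambda i: squared_distance(p, centroids[i]))
            clusters[nearest].append(p)
        # Keep the old centroid if a cluster ends up empty.
        centroids = [mean_point(c) if c else centroids[i]
                     for i, c in enumerate(clusters)]
    return centroids, clusters


# Hypothetical unlabeled data with two visible groups.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 1.5), (8.0, 8.0), (9.0, 8.5), (8.5, 9.0)]
centroids, clusters = kmeans(points, centroids=[points[0], points[3]])
print(centroids)
print(clusters)
```

No labels are supplied anywhere: the grouping emerges only from distances between the points.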

3. Semi-Supervised Learning

Definition: Uses a small amount of labeled data and a large amount of unlabeled data.

Goal: Improve performance when labeled data is limited.

Examples:

Text classification with limited labeled documents

Image recognition with few annotated samples

Techniques:

Pseudo-labeling

Consistency regularization
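Pseudo-labeling can be sketched with a very simple classifier. In the example below, a nearest-centroid model is fit on two labeled points, used to label the unlabeled points it is confident about, and then refit on the enlarged set. The data, the `min_margin` confidence threshold, and the nearest-centroid model are all assumptions for illustration. In practice the model is usually a neural network and confidence is a predicted probability.

```python
def fit_centroids(labeled):
    """Compute the mean feature value for each label."""
    groups = {}
    for x, label in labeled:
        groups.setdefault(label, []).append(x)
    return {label: sum(values) / len(values) for label, values in groups.items()}


def predict_with_margin(centroids, x):
    """Return the nearest label and how much closer it is than the runner-up."""
    ranked = sorted(centroids, key=lambda label: abs(x - centroids[label]))
    best, runner_up = ranked[0], ranked[1]
    margin = abs(x - centroids[runner_up]) - abs(x - centroids[best])
    return best, margin


def pseudo_label(labeled, unlabeled, min_margin=2.0):
    """Label confident unlabeled points with the current model, then refit."""
    centroids = fit_centroids(labeled)
    confident, uncertain = [], []
    for x in unlabeled:
        label, margin = predict_with_margin(centroids, x)
        if margin >= min_margin:
            confident.append((x, label))
        else:
            uncertain.append(x)
    return fit_centroids(labeled + confident), confident, uncertain


# Hypothetical data: two labeled examples and five unlabeled ones.
labeled = [(1.0, "low"), (9.0, "high")]
unlabeled = [1.5, 2.0, 8.0, 8.5, 5.2]
centroids, confident, uncertain = pseudo_label(labeled, unlabeled)
print(centroids)
print(uncertain)
```

The point near the middle (5.2) is left unlabeled because the model is not confident about it, which is the usual safeguard against reinforcing its own mistakes.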

4. Self-Supervised Learning ✅ (Modern Type)

Definition: Learns from raw, unlabeled data by generating labels from the data itself.

Goal: Learn general representations by solving pretext tasks.

Examples:

Language Models: GPT (predict next token), BERT (mask prediction)

Vision: MAE, SimCLR, DINO

Multimodal: CLIP (image-text alignment)

Pretext Tasks:

Masked token prediction

Contrastive learning

Temporal prediction

Used In:

LLMs

Vision foundation models

Audio and speech models
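The idea of "generating labels from the data itself" can be shown without any model at all. The sketch below builds training pairs for two pretext tasks from a raw sentence. The helper names `next_token_pairs` and `mask_tokens` are hypothetical, and passing the masked positions explicitly is a simplification: models such as BERT choose them at random.

```python
def next_token_pairs(tokens):
    """Next-token prediction: each prefix is an input, the following token is its label."""
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]


def mask_tokens(tokens, positions, mask="[MASK]"):
    """Masked prediction: hide the chosen tokens; the hidden tokens become the labels."""
    masked = [mask if i in positions else t for i, t in enumerate(tokens)]
    labels = {i: tokens[i] for i in positions}
    return masked, labels


tokens = "the cat sat on the mat".split()

for context, target in next_token_pairs(tokens)[:2]:
    print(context, "->", target)

masked, labels = mask_tokens(tokens, positions={2})
print(masked, labels)
```

No human annotated anything here: both the inputs and the targets come from the same unlabeled text.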

5. Reinforcement Learning

Definition: The model learns by interacting with an environment and receiving rewards or penalties.

Goal: Learn a policy that maximizes cumulative reward.

Examples:

Game playing (Chess, Go, Atari)

Robotics

Autonomous vehicles

Key Concepts:

Agent, Environment, Action, Reward, Policy

Popular Algorithms:

Q-Learning

Deep Q Networks (DQN)

Proximal Policy Optimization (PPO)
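The key concepts above fit into a short tabular Q-Learning sketch. The environment is a hypothetical five-state corridor invented for this example: the agent starts at the left end and receives a reward of 1 only when it reaches the right end. The learning rate, discount factor, episode count, and the purely random exploration strategy are assumptions as well.

```python
import random

N_STATES = 5                 # states 0..4; state 4 is the goal (toy environment)
ACTIONS = ["left", "right"]


def step(state, action):
    """Environment: move along the corridor; reaching the last state pays reward 1."""
    next_state = max(state - 1, 0) if action == "left" else state + 1
    done = next_state == N_STATES - 1
    reward = 1.0 if done else 0.0
    return next_state, reward, done


def q_learning(episodes=500, alpha=0.5, gamma=0.9, seed=0):
    """Learn action values Q(state, action) from trial and error."""
    rng = random.Random(seed)
    q = {(s, a): 0.0 for s in range(N_STATES) for a in ACTIONS}
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Explore with random actions; Q-Learning still learns the greedy values.
            action = rng.choice(ACTIONS)
            next_state, reward, done = step(state, action)
            best_next = 0.0 if done else max(q[(next_state, a)] for a in ACTIONS)
            target = reward + gamma * best_next
            q[(state, action)] += alpha * (target - q[(state, action)])
            state = next_state
    return q


def greedy_policy(q):
    """Policy: in each non-goal state, pick the action with the highest value."""
    return [max(ACTIONS, key=lambda a: q[(s, a)]) for s in range(N_STATES - 1)]


q = q_learning()
print(greedy_policy(q))
```

After training, the greedy policy should choose "right" in every state, since that is the shortest path to the reward.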

🔁 Summary Table

| Type | Label Requirement | Learns From | Example Models |
|---|---|---|---|
| Supervised | Labeled data | Input-output pairs | Linear regression, SVM |
| Unsupervised | No labels | Patterns in data | k-Means, PCA |
| Semi-Supervised | Few labels | Mixed data | Pseudo-labeling |
| Self-Supervised | No manual labels | Generated tasks | GPT, BERT, CLIP |
| Reinforcement Learning | Rewards | Trial & error | AlphaGo, DQN, PPO |

🚀 Why ML Matters

ML bridges the gap between explicit programming (as in Symbolic AI) and adaptive behavior, enabling systems to scale and tackle real-world complexity — such as recognizing faces, translating languages, or predicting trends.

🧠 Deep Learning (DL)

“Deep Learning allows computational models that are composed of multiple processing layers to learn representations of data with multiple levels of abstraction.” — Yann LeCun, Yoshua Bengio, Geoffrey Hinton

🔍 What is DL?

Deep Learning is a subset of Machine Learning that uses artificial neural networks with many layers to model complex patterns in large amounts of data.

It is particularly powerful for tasks where manual feature extraction is difficult or impossible.

🧬 Why “Deep”?

The term “deep” refers to the many layers in a neural network — each layer progressively extracts higher-level features from the raw input.

Example (image recognition):

🟪 Pixels → 🟥 Edges → 🟧 Shapes → 🟨 Objects
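A tiny two-layer network shows why stacking layers helps. The sketch below computes XOR, which no single linear layer can represent. The weights are hand-picked for illustration (an assumption of this example), whereas a real network would learn them from data. The hidden layer builds two intermediate features, and the output layer combines them.

```python
def relu(values):
    """Activation: keep positive values, replace negatives with zero."""
    return [max(0.0, v) for v in values]


def dense(weights, biases, inputs):
    """Fully connected layer: one weighted sum plus bias per output unit."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


# Hand-picked weights (not learned), chosen so the network computes XOR.
W1 = [[1.0, 1.0], [1.0, 1.0]]   # hidden unit 1: x1 + x2, hidden unit 2: x1 + x2 - 1
B1 = [0.0, -1.0]
W2 = [[1.0, -2.0]]              # output: h1 - 2 * h2
B2 = [0.0]


def xor_net(x1, x2):
    hidden = relu(dense(W1, B1, [x1, x2]))   # layer 1: intermediate features
    return dense(W2, B2, hidden)[0]          # layer 2: combine the features


for a in (0.0, 1.0):
    for b in (0.0, 1.0):
        print(a, b, "->", xor_net(a, b))
```

The second hidden unit only activates when both inputs are on, which is exactly the higher-level feature the output layer needs to cancel the (1, 1) case.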

📚 Key Applications

🖼️ Image classification and object detection

🗣️ Speech recognition and synthesis

🌍 Language translation (NLP)

🧬 Drug discovery and genomics

🚗 Autonomous driving

🧪 Common Architectures

Convolutional Neural Networks (CNNs) – for images

Recurrent Neural Networks (RNNs) and Transformers – for sequences like text and speech

Generative Adversarial Networks (GANs) – for image generation

Autoencoders – for representation learning and anomaly detection
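As a glimpse of what a CNN layer computes, the sketch below slides a small kernel over an image and produces a feature map. The image and the edge-detecting kernel are hand-made assumptions for this example. In a trained CNN the kernel values are learned rather than chosen by hand.

```python
def conv2d(image, kernel):
    """Valid (no padding) 2D cross-correlation, the operation CNN layers call convolution."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [sum(image[r + i][c + j] * kernel[i][j]
             for i in range(kh) for j in range(kw))
         for c in range(out_w)]
        for r in range(out_h)
    ]


# Toy 3x4 image: dark on the left (0), bright on the right (1).
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hand-made kernel that responds where brightness increases from left to right.
kernel = [[-1, 1]]

feature_map = conv2d(image, kernel)
for row in feature_map:
    print(row)   # each row is [0, 1, 0]: the edge sits in the middle column
```

The same small kernel is reused at every position, which is why convolutional layers need far fewer parameters than fully connected ones on images.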

🚧 Challenges

Often requires large datasets (labeled ones for supervised training)

Computationally intensive (often needs GPUs/TPUs)

Less interpretable than traditional ML models

Risk of overfitting if not properly regularized

🚀 Why DL Matters

Deep Learning has revolutionized AI by enabling systems to learn directly from raw data — making breakthroughs in areas where human-designed features failed or plateaued.